AI tools for strategic awareness

This article was created by Forethought. Read the full article on our website.

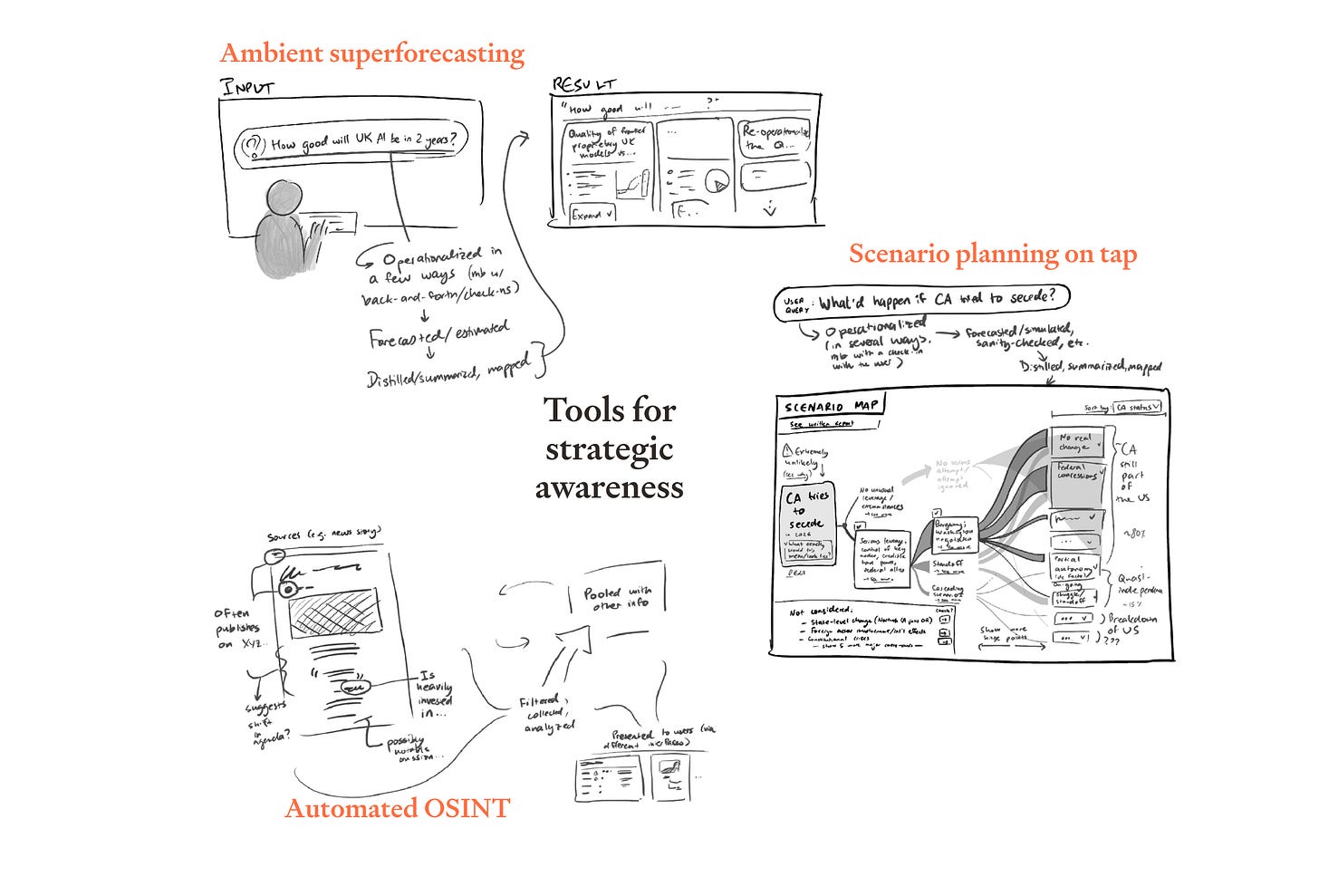

We’ve recently published a set of design sketches for tools for strategic awareness.

We think that near-term AI could help a wide variety of actors to have a more grounded and accurate perspective on their situation, and that this could be quite important:

Tools for strategic awareness could make individuals more epistemically empowered and better able to make decisions in their own best interests.

Better strategic awareness could help humanity to handle some of the big challenges that are heading towards us as we transition to more advanced AI systems.

We’re excited for people to build tools that help this happen, and hope that our design sketches will make this area more concrete, and inspire people to get started.

The (overly-)specific technologies we sketch out are:

Ambient superforecasting — When people want to know something about the future, they can run a query like a Google search, and get back a superforecaster-level assessment of likelihoods.

Scenario planning on tap — People can easily explore the likely implications of possible courses of action, summoning up coherent grounded narratives about possible futures, and diving seamlessly into analysis of the implications of different hypotheticals.

Automated OSINT — Everyone has instant access to professional-grade political analysis; when someone does something self-serving, this will be transparent.

If you have ideas for how to implement these technologies, issues we may not have spotted, or visions for other tools in this space, we’d love to hear them.

Great post! As someone with experience in strategic foresight/scenario planning, I want to push back a little on the "scenario planning on tap" idea.

tl;dr: value of the process often > value of the output

The outputs of scenario planning exercises are useful (how often people actually read them is a different question...) but the main value frequently comes from just getting a bunch of relevant people in the room and having them think about the future for a while.

If this process were automated away, you might:

1. Atrophy the skill of key decision-makers at creatively exploring possible futures

2. Reduce the subconscious/intuitive/emotional "closeness" of future generations in the minds of key decision-makers

3. Artificially narrow the range of options considered as humans with key contextual/tacit knowledge are no longer involved: "The computer has handled it, these are the 4 options"

(1.) and (2.) are true for both individual futures exercises and the broader process of upskilling people in anticipation of these exercises; in the UK civil service, for instance, many policymakers and analysts (especially seniors) get a fair bit of training in futures techniques. I'd be surprised if this didn't improve decision-making generally by making sure (senior) people have the future impacts of their day-to-day decisions closer to the forefront of their minds than they otherwise would. If that's no longer needed, the training will become an unnecessary expense, and the benefits may disappear.

None of the above is fatal, just something to be considered.